Unleashing the full potential of AI through Cloud Migration

Artificial Intelligence (AI) is no longer a nice-to-have or a goal that companies aspire to reach with their data strategies. AI has arrived and is rapidly disrupting the business landscape. To stay competitive, companies must be in a position to leverage AI technologies. As companies embrace the cloud, they can […]

Why should you migrate to the cloud?

Migrating to the cloud can be a daunting task for any organisation. There are a variety of factors to consider, including which applications to move, how to move them, and which type of cloud platform to use. Cloud migration is becoming increasingly important as more businesses adopt cloud services. There are many reasons for […]

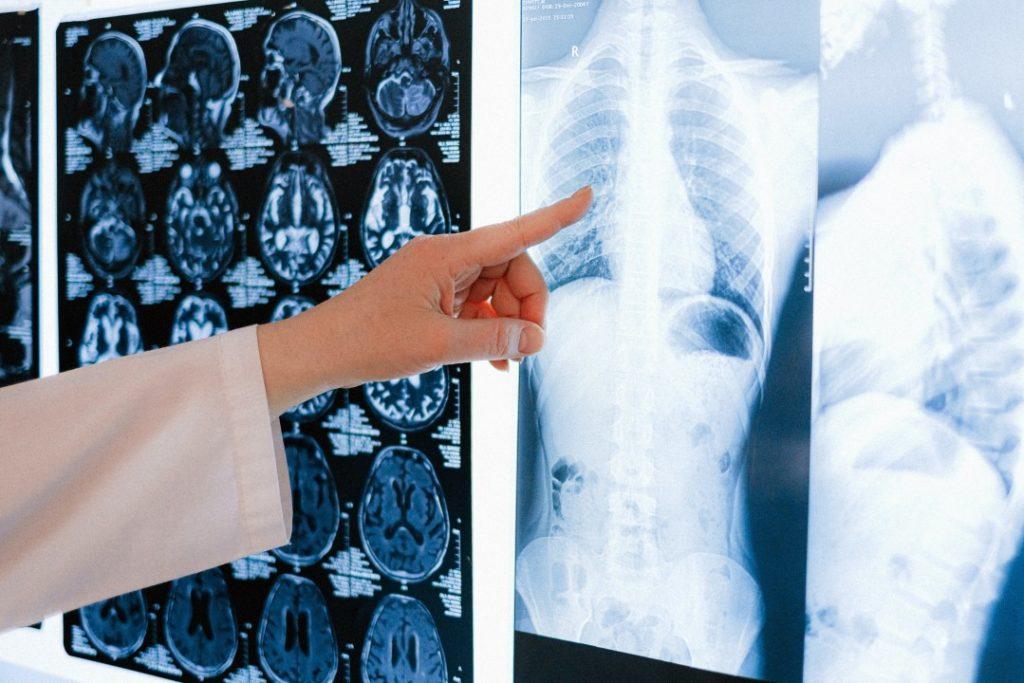

Chest X-Ray Triage using Artificial Intelligence Solutions

As a long-term partner of IntelliHQ, a Queensland AI Hub consortium member, the TechConnect team was alerted to the Queensland AI Hub Medical datathon, to be held over two weeks in June 2020. TechConnect is an AWS Advanced Consulting Partner with a data science team whose experience ranges from beginner to expert. As such, […]

Gold Coast Excellence Awards 2020

The Gold Coast Business Excellence Awards launched in 1996 and in 2020 are celebrating their 25th year! During this time, the Awards have grown to be recognised as the region’s most comprehensive and prestigious business awards scheme, offering specific and meaningful benefits to the wider Gold Coast business community. TechConnect is super proud to support […]

Digital Transformation with Data

Harnessing Data to drive effective digital transformation

The COVID-19 pandemic has made clear that businesses need to be prepared for flexible, remote working practices. As lockdowns forced offices to close and people headed home to limit the potential spread of the virus, many organisations found they weren’t prepared to provision the necessary work from home […]

CRN Fast50 for 2019 Award

TechConnect was listed in the CRN Fast50 for 2019, settling into 15th position in our debut year. A day after receiving the Deloitte Technology Fast 50 award, we received another great result. Read about the Deloitte Technology Fast 50 here if you missed it. The CRN Fast50 award, now in its 11th […]

Deloitte – Technology Fast 50 Australia 2019

We are extremely excited and honoured to have been listed as one of Deloitte’s Technology Fast 50 Australian companies, ranking 43rd on the list with a growth rate of 161% in our debut year. This is a huge achievement not only for the company but also for our team and the hard work they have […]

What’s the difference between Artificial Intelligence (AI) & Machine Learning (ML)?

The field of Artificial Intelligence encompasses all efforts at imbuing computational devices with capabilities that have traditionally been viewed as requiring human-level intelligence. This includes: chess, Go and generalised game playing; planning and goal-directed behaviour in dynamic and complex environments; theorem proving, proof assistants and […]

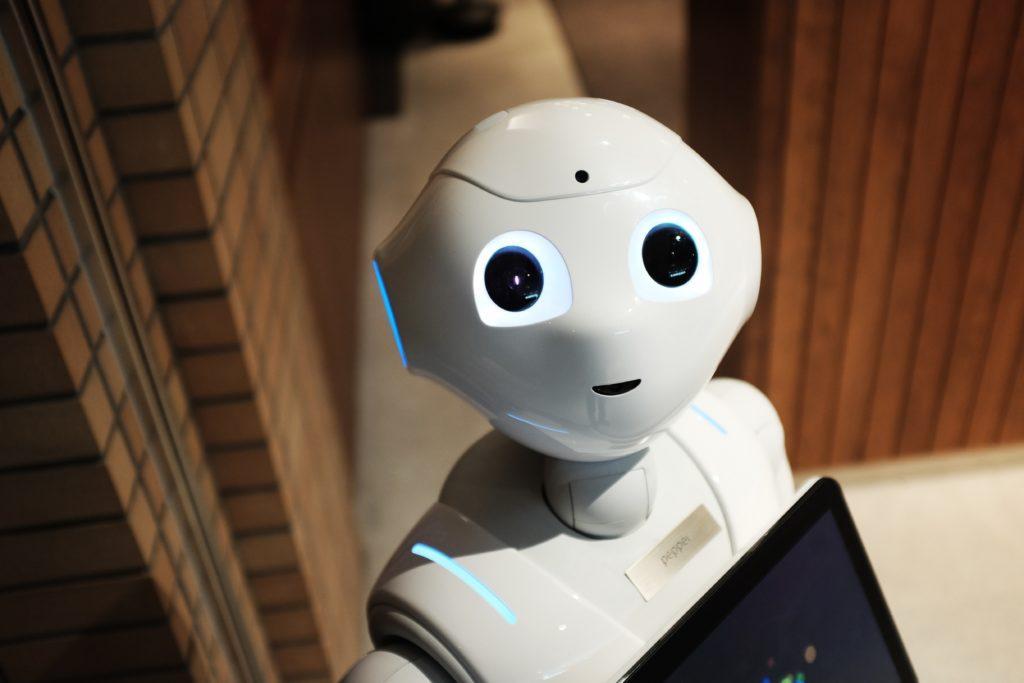

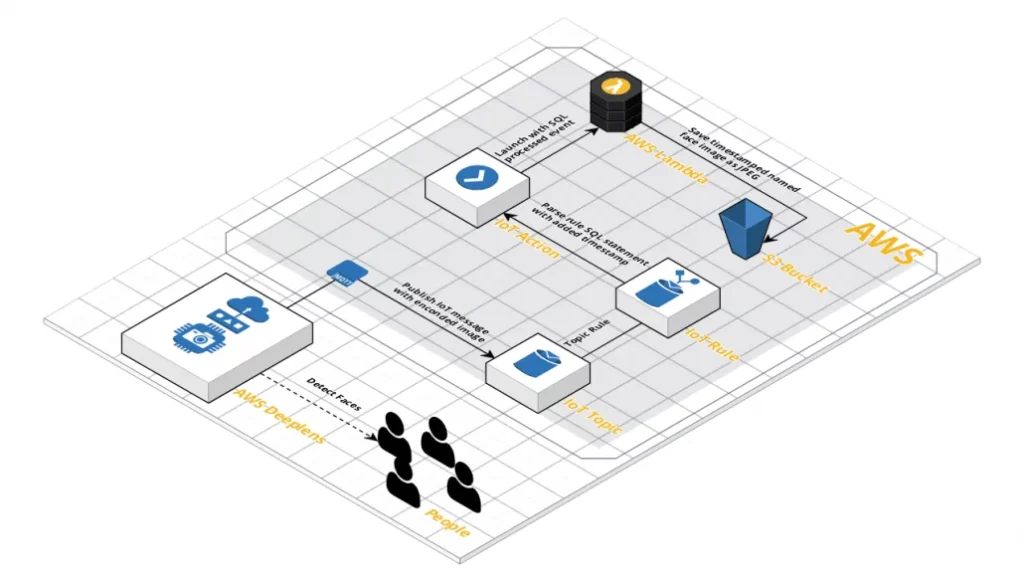

AWS DeepLens: Creating an IoT Rule (Part 2 of 2)

This post is the second in a series on getting started with the AWS DeepLens. In Part 1, we introduced a program that could detect faces and crop them by extending the boilerplate Greengrass Lambda and pre-built model provided by AWS. This focussed on the local capabilities of the device, but the DeepLens device is […]

AWS DeepLens: Getting Hands-on (Part 1 of 2)

TechConnect recently acquired two AWS DeepLens devices to play around with. Announced at re:Invent 2017, the AWS DeepLens is a small, Intel Atom-powered device focused on deep learning, with an embedded high-definition video camera. The DeepLens runs AWS Greengrass, allowing local events to be processed quickly on the device without having to send a large amount of data for processing […]

Machine Learning with Amazon SageMaker

Computers are generally programmed to do what the developer dictates and will only behave predictably in the scenarios the developer has specified. In recent years, people have increasingly turned to computers to perform tasks that can’t be achieved with traditional programming and that previously had to be done manually by humans. Machine Learning gives computers the ability […]

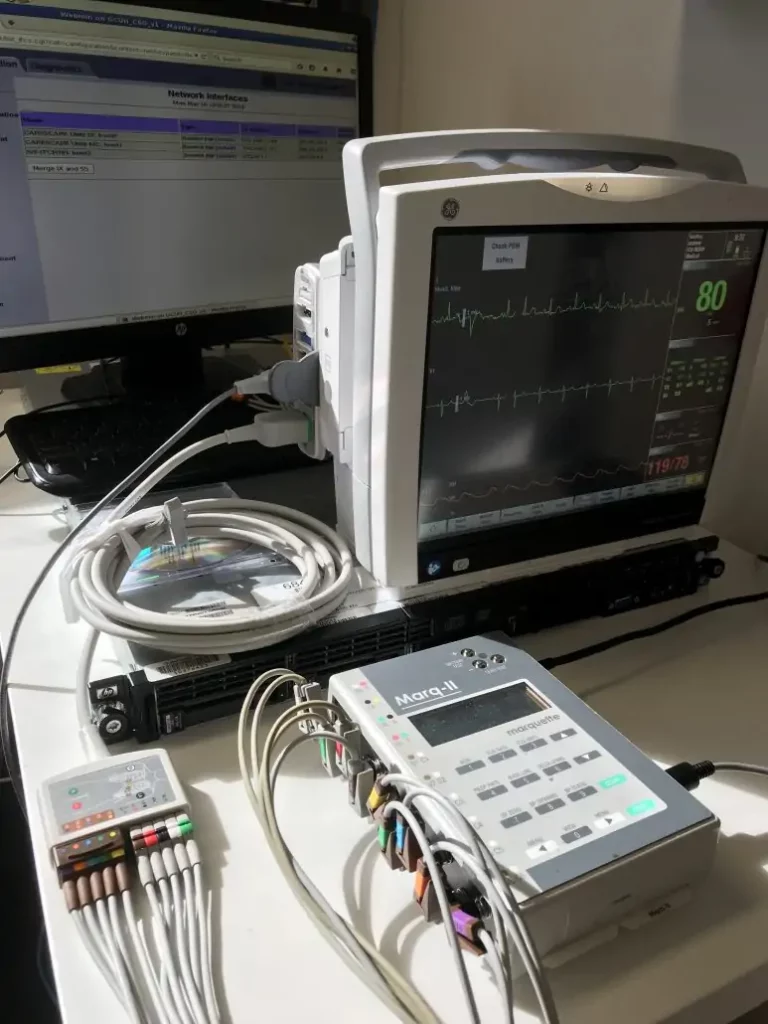

Precision Medicine Data Platform

Recently, TechConnect and IntelliHQ attended the eHealth Expo 2018. IntelliHQ are specialists in Machine Learning in the health space, and are the innovators behind the development of a cloud-based precision medicine data platform. TechConnect are IntelliHQ’s cloud technology partners, and our strong relationship with Amazon Web Services and the AWS life sciences team has enabled […]